Most software houses are not suffering from a lack of tools. They suffer from fragmented execution. Proposals are written manually from scratch, estimations live in disconnected spreadsheets, QA firefights happen at the end of a sprint, and client reporting is assembled under pressure on Friday afternoon. Teams stay busy, but the system stays inefficient.

Well-designed AI automation does not replace specialists. It removes repetitive cognitive work so people can focus on architecture, product decisions, communication, and risk management. This guide provides a practical implementation path across the full delivery lifecycle: presales, discovery, engineering, QA, and reporting.

Why software house automation is now a margin strategy

Client expectations have shifted: faster delivery, better predictability, and transparent communication are baseline requirements. If your operating model is mostly manual, every additional project adds chaos and overhead instead of leverage.

- Manual effort scales linearly with project volume.

- Quality becomes person-dependent instead of system-dependent.

- Decision cycles slow down because context is distributed across tools.

AI automation addresses all three by standardizing inputs, accelerating first drafts, and shortening feedback loops.

A 4-layer framework for reliable AI automation

1) Knowledge layer

Centralized access to delivery standards, offer templates, architecture decisions, QA policies, and past project insights. Without this, model output lacks business context.

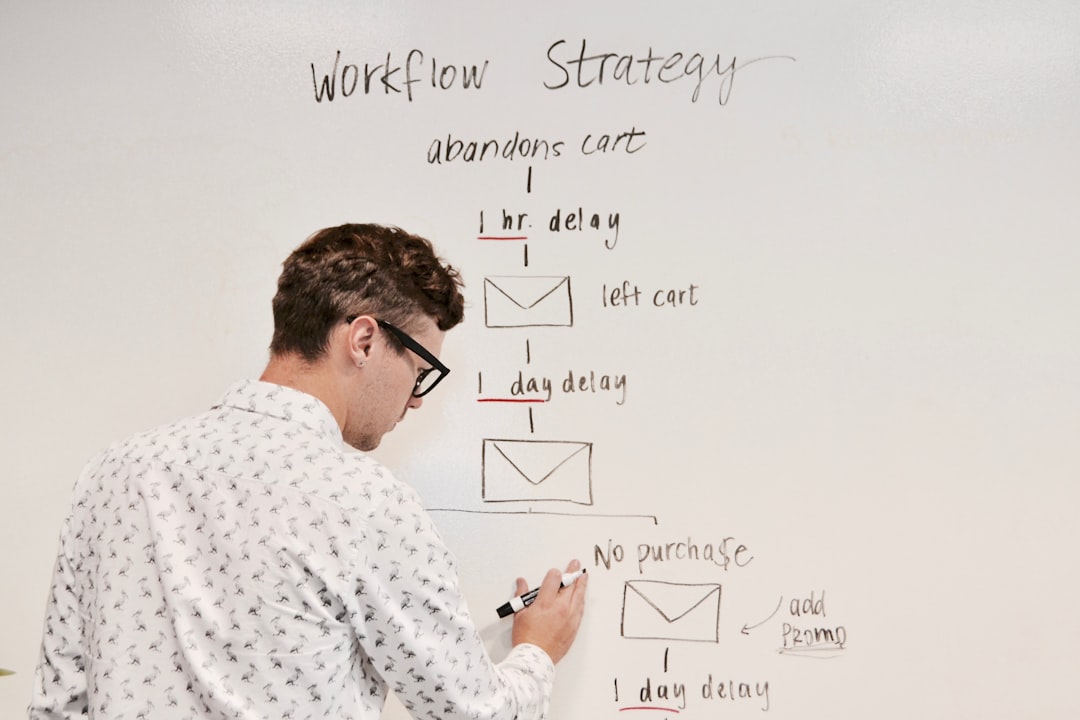

2) Workflow layer

Clear process orchestration: trigger → draft generation → human review → publish/send.
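The orchestration chain above can be modelled as a small state machine, so that no draft reaches a client without passing human review. This is a minimal sketch: the stage names and the `advance` helper are illustrative assumptions, not part of any specific tool.

```python
from enum import Enum


class Stage(Enum):
    TRIGGERED = "triggered"
    DRAFTED = "drafted"
    APPROVED = "approved"
    PUBLISHED = "published"


# Allowed transitions. A rejected draft goes back to TRIGGERED for regeneration.
TRANSITIONS = {
    Stage.TRIGGERED: {Stage.DRAFTED},
    Stage.DRAFTED: {Stage.APPROVED, Stage.TRIGGERED},
    Stage.APPROVED: {Stage.PUBLISHED},
    Stage.PUBLISHED: set(),
}


def advance(current: Stage, target: Stage) -> Stage:
    """Return the target stage, or raise if the step would skip a gate."""
    if target not in TRANSITIONS[current]:
        raise ValueError(f"cannot move from {current.value} to {target.value}")
    return target
```

Because `DRAFTED` has no direct path to `PUBLISHED`, skipping the review step fails loudly instead of silently sending an unreviewed draft.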

3) Quality layer

Validation gates: security checks, terminology rules, pricing constraints, and approval requirements.
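As a rough illustration, a validation gate can be a plain function that returns a list of violations. The forbidden terms, the pricing floor, and the `check_draft` name below are hypothetical placeholders for your own policy; security checks would be added in the same style.

```python
import re

FORBIDDEN_TERMS = ("guaranteed", "bug-free")  # hypothetical terminology rule
MIN_DAY_RATE = 400  # hypothetical pricing floor, in the agency's currency


def check_draft(text: str, day_rate: float, approved_by: list[str]) -> list[str]:
    """Return gate violations; an empty list means the draft passes."""
    problems = []
    for term in FORBIDDEN_TERMS:
        # Whole-word, case-insensitive match against the draft text.
        if re.search(rf"\b{re.escape(term)}\b", text, flags=re.IGNORECASE):
            problems.append(f"forbidden term: {term}")
    if day_rate < MIN_DAY_RATE:
        problems.append("day rate below pricing floor")
    if not approved_by:
        problems.append("missing approval")
    return problems
```

Returning every violation at once, rather than stopping at the first, lets the reviewer fix a draft in a single pass.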

4) Metrics layer

Operational measurement: lead time, revision cycles, escaped defects, response times, and client sentiment.

Presales and proposal generation: fastest ROI zone

In many agencies, top technical experts spend hours on repetitive proposal writing. AI can generate a strong first proposal draft in minutes if guided by internal standards.

High-impact automations

- Proposal draft generation from incoming brief and project type.

- MVP vs phase-2 scope suggestions with assumptions.

- Risk and dependency summary for delivery planning.

- Preliminary effort matrix with confidence level.

- Language compliance checks against sales policy.

Presales implementation checklist

- Define 3–5 recurring project archetypes.

- Create mandatory proposal section templates.

- Introduce qualification questions that block incomplete inputs.

- Require review by Tech Lead and Account owner.

- Track time-to-proposal and conversion impact.
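The qualification step from the checklist can be sketched as an intake check that runs before any draft is generated. The field names and archetype labels here are assumptions for illustration; substitute your own.

```python
# Hypothetical required fields and project archetypes.
REQUIRED_FIELDS = ("client_name", "project_type", "budget_range", "deadline")
ARCHETYPES = {"mvp_web_app", "mobile_app", "legacy_modernization"}


def missing_inputs(brief: dict) -> list[str]:
    """List what blocks proposal drafting; empty means the brief is qualified."""
    missing = [
        field for field in REQUIRED_FIELDS
        if not str(brief.get(field) or "").strip()
    ]
    project_type = brief.get("project_type")
    if project_type and project_type not in ARCHETYPES:
        missing.append("project_type (unknown archetype)")
    return missing
```

A brief with an empty budget range, for example, is returned to the account owner with `["budget_range"]` instead of producing a draft built on guesses.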

Discovery and delivery planning

The handoff from client expectation to executable backlog is where many projects lose clarity. AI can accelerate documentation while keeping product decisions in human hands.

Practical use cases

- Transform workshop notes into user stories and acceptance criteria.

- Suggest epic decomposition into implementation-ready items.

- Detect requirement gaps (permissions, edge cases, auditability).

- Generate sprint plan draft with risk markers.

Critical rule: Product Owner and Tech Lead validate final scope and priority.

Engineering workflows: augmentation, not autopilot

In development teams, AI is most effective as a coding and review assistant. It accelerates repetitive tasks but should not own architecture or release decisions.

What to automate safely

- Boilerplate and repetitive module scaffolding.

- Unit test draft generation for existing code.

- PR pre-review summaries (risk spots, missing test coverage, smells).

- Change summaries for PMs and clients.

- Technical documentation from commits and pull requests.

Non-negotiable boundaries

- No auto-merge of AI-generated code without senior review.

- No external model upload of client code without policy approval.

- Mandatory security and license scanning in CI pipelines.
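Two of these boundaries can be enforced mechanically as a merge gate in CI. The sketch below assumes a hypothetical `PullRequest` record populated by your pipeline; it does not call any real code-hosting API.

```python
from dataclasses import dataclass


@dataclass
class PullRequest:
    # Hypothetical fields, filled in by your CI pipeline.
    ai_generated: bool
    senior_approvals: int
    security_scan_passed: bool
    license_scan_passed: bool


def merge_blockers(pr: PullRequest) -> list[str]:
    """Return the reasons a pull request must not be merged yet."""
    blockers = []
    if pr.ai_generated and pr.senior_approvals < 1:
        blockers.append("AI-generated change needs senior review")
    if not pr.security_scan_passed:
        blockers.append("security scan failed or missing")
    if not pr.license_scan_passed:
        blockers.append("license scan failed or missing")
    return blockers
```

The rule about uploading client code to external models is a data policy question and is better handled by classification checks than by a merge gate.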

QA transformation: from late-stage testing to continuous quality

If QA enters only at the end of a sprint, automation just surfaces issues faster; it does not improve quality. Shift-left discipline is required.

QA automations with strong ROI

- Generate test scenarios from stories and acceptance criteria.

- Prioritize regression suites by impacted modules and risk signals.

- Classify defects and identify duplicates automatically.

- Suggest negative test cases for security and permission flows.

- Produce test-run summaries with impact context.
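Regression prioritization, one of the items above, does not need a model to get started. The sketch below ranks suites by the summed risk of the changed modules they cover. The suite-to-module mapping and the risk weights are assumptions you would derive from your own repository and defect history.

```python
def prioritize_suites(
    suites: dict[str, set[str]],
    changed: set[str],
    risk: dict[str, float],
) -> list[str]:
    """Order suite names by summed risk of the changed modules they cover."""
    scores = {
        # Modules without an explicit weight default to 1.0.
        name: sum(risk.get(module, 1.0) for module in covered & changed)
        for name, covered in suites.items()
    }
    # Drop suites with a zero score; highest score first, ties broken by name.
    ranked = [name for name, score in scores.items() if score > 0]
    return sorted(ranked, key=lambda name: (-scores[name], name))
```

An AI step can then refine the risk weights from defect reports, while the ordering logic itself stays deterministic and easy to review.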

Minimum QA standard

Every delivery item should include explicit acceptance criteria, a happy-path test, and edge-case coverage before release review.

Client reporting automation that drives decisions

Clients rarely need long decks. They need operational clarity: what was delivered, what is blocked, what decisions are needed, and what risks are emerging. AI can draft weekly reports from Jira, Git, and team communication data.

Recommended report structure

- Sprint outcomes: done, in-progress, blocked.

- Top risks and dependency decisions.

- Quality indicators: critical defects, release stability trend.

- Budget and capacity utilization snapshot.

- Next-week execution plan.
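The first section of that structure can be prepared deterministically before any model is involved. This sketch groups tracker issues into the three outcome buckets. The status names and the `key`/`status` fields are hypothetical and would need to match your tracker's export.

```python
from collections import defaultdict

# Hypothetical mapping from tracker statuses to the report's three buckets.
BUCKETS = {
    "Done": "done",
    "In Progress": "in-progress",
    "In Review": "in-progress",
    "Blocked": "blocked",
}


def sprint_outcomes(issues: list[dict]) -> dict[str, list[str]]:
    """Group issue keys into done / in-progress / blocked."""
    grouped = defaultdict(list)
    for issue in issues:
        # Unknown statuses are treated as in-progress in this sketch.
        bucket = BUCKETS.get(issue["status"], "in-progress")
        grouped[bucket].append(issue["key"])
    return {bucket: grouped[bucket] for bucket in ("done", "in-progress", "blocked")}
```

Feeding the model structured buckets instead of a raw issue dump tends to make the drafted narrative easier to verify against the source data.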

This moves PM work from manual status assembly to higher-value stakeholder communication.

Internal knowledge linking and process reuse

One hidden cost in service organizations is repeated reinvention. Teams solve similar problems in isolation. Mature software house automation includes reusable knowledge workflows:

- playbooks for presales, discovery, QA, and release governance,

- tagged case libraries of architecture and delivery decisions,

- semantic linking to relevant historical implementations during project kickoff.

This is where software house automation becomes compounding, not one-off.
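As a simplified stand-in for semantic linking, a tagged case library can be queried by tag overlap at project kickoff. The sketch uses Jaccard similarity on tags; a production setup might use embeddings instead. The case names and tags are invented for illustration.

```python
def related_cases(
    project_tags: set[str],
    library: dict[str, set[str]],
    top: int = 3,
) -> list[str]:
    """Return up to `top` case names ranked by tag overlap with the new project."""

    def jaccard(a: set[str], b: set[str]) -> float:
        union = a | b
        return len(a & b) / len(union) if union else 0.0

    scored = [(jaccard(project_tags, tags), name) for name, tags in library.items()]
    # Highest similarity first, ties broken by name; cases with no overlap are dropped.
    ranked = sorted(scored, key=lambda pair: (-pair[0], pair[1]))
    return [name for score, name in ranked if score > 0][:top]
```

Even this crude ranking only works if past projects are tagged consistently, which is why the case library needs an owner.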

Governance: prevent tool sprawl and compliance risk

Without governance, each team member adopts different models, prompts, and quality expectations. Output quality diverges and legal exposure increases.

Baseline governance policy

- Approved tools and model registry.

- Data classification: what can and cannot be processed externally.

- Mandatory human approval before client-facing communication.

- Standard prompt patterns by function (presales, QA, reporting, docs).

- Audit trail for generation, edits, and approvals.
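Two of these policy items translate directly into code: a data classification check and an audit record. The classification labels and record fields below are assumptions; the point is that every generation, edit, and approval leaves a machine-readable trace.

```python
import json
from datetime import datetime, timezone

# Hypothetical labels for data that may be sent to an external model.
EXTERNAL_OK = {"public", "internal"}


def may_process_externally(classification: str) -> bool:
    """Unknown or stricter labels are rejected by default."""
    return classification in EXTERNAL_OK


def audit_entry(actor: str, action: str, artifact_id: str, model: str) -> str:
    """Serialize one audit record as a JSON line with a UTC timestamp."""
    return json.dumps({
        "ts": datetime.now(timezone.utc).isoformat(),
        "actor": actor,
        "action": action,
        "artifact_id": artifact_id,
        "model": model,
    })
```

Appending these lines to a write-once log gives you the trail needed to answer "who approved this, and which model produced it" later.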

30/60/90-day rollout plan

Days 1–30: foundation

- Map current process bottlenecks.

- Select two pilot areas with high repetition.

- Define baseline metrics and quality gates.

Days 31–60: pilot execution

- Launch automations in presales and reporting.

- Train team leads and set weekly retrospectives.

- Measure quality and cycle-time impact.

Days 61–90: scale

- Expand to discovery and QA workflows.

- Standardize cross-project operating procedures.

- Publish internal AI playbook and ownership model.

Common implementation mistakes

- Starting from tools instead of process outcomes.

- No executive owner accountable for adoption.

- No baseline metrics, only subjective impressions.

- No enablement program to build team confidence with the new workflows.

- Automating critical decisions without review controls.

Operational KPI catalog for automation programs

Without measurement, automation quickly becomes a belief system. Define a focused KPI set early and review trends weekly. You do not need dozens of metrics; you need a consistent decision dashboard.

- Proposal lead time: from brief intake to approved client version.

- Story cycle time: from accepted requirement to deployed value.

- Defect escape rate: share of defects discovered after production release rather than before it.

- Rework ratio: percentage of work returned for changes.

- Reporting preparation time: PM effort required for weekly status.

- Response time against SLA: average time to unblock client-critical issues.

Track these as trends, not isolated snapshots. Trend movement determines whether automation improvements are stable.
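To make the trend idea concrete, here is a minimal sketch of two of the KPIs and a simple trend comparison. The formulas are one reasonable reading of the definitions above, not a standard: escape rate is taken as escaped defects over all defects found, and the trend compares the mean of the latest window with the window before it.

```python
def defect_escape_rate(found_after_release: int, found_before_release: int) -> float:
    """Share of all found defects that were discovered after release."""
    total = found_after_release + found_before_release
    return found_after_release / total if total else 0.0


def rework_ratio(items_returned: int, items_delivered: int) -> float:
    """Share of delivered items that were returned for changes."""
    return items_returned / items_delivered if items_delivered else 0.0


def trend(values: list[float], window: int = 4) -> float:
    """Mean of the last `window` values minus the mean of the window before it."""
    recent = values[-window:]
    previous = values[-2 * window:-window]
    if len(recent) < window or len(previous) < window:
        raise ValueError("not enough data points for two full windows")
    return sum(recent) / window - sum(previous) / window
```

With weekly data and the default window, a negative `trend` on proposal lead time means the last four weeks were faster on average than the four before, which is a more stable signal than a single good week.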

Role redesign after AI adoption

The most damaging message in AI rollout is “the tool will do your job.” The useful message is “the tool handles repetitive preparation, while humans own judgment and accountability.”

Example responsibility matrix

- Account/Presales: confirms client context and approves final proposal.

- Tech Lead: validates architecture realism and delivery risk.

- PM/Delivery Lead: orchestrates workflow consistency and handoffs.

- QA Lead: controls test depth and release quality gates.

- AI Process Owner: maintains prompts, templates, and KPI reviews.

Clear ownership prevents ambiguity around AI-generated outputs and keeps accountability human-centered.

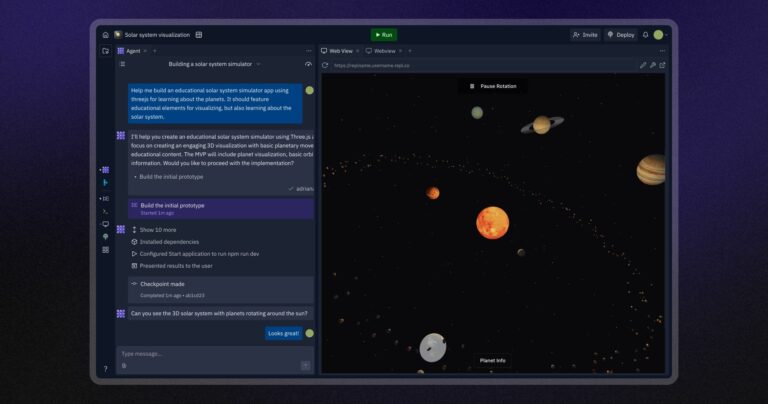

Technical blueprint: start simple and reliable

Most teams do not need a complex AI platform in phase one. A lightweight architecture is enough to deliver value:

- Data sources: CRM, Jira, Git, internal docs, communication logs.

- Integration layer: webhooks and scheduled jobs.

- AI runtime: primary model plus fallback strategy.

- Rule engine: validation checks and policy constraints.

- Review interface: explicit approval actions by role.

- Monitoring: latency, failure rate, revision history.

In practice, data quality and review flow matter more than model novelty.
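The "primary model plus fallback" item can be sketched without committing to any provider. Here each model is just a callable that takes a prompt and returns text; wiring these callables to a real API is left out and would depend on your vendor.

```python
from typing import Callable, Sequence


def generate_with_fallback(
    prompt: str,
    models: Sequence[Callable[[str], str]],
) -> tuple[str, int]:
    """Try each model in order; return (output, index of the model that answered)."""
    errors = []
    for index, call in enumerate(models):
        try:
            return call(prompt), index
        except Exception as exc:  # broad on purpose: any provider failure triggers fallback
            errors.append(f"model {index}: {exc}")
    raise RuntimeError("all models failed: " + "; ".join(errors))
```

Returning the index of the model that answered makes it easy to feed the monitoring layer: a rising share of fallback responses is an early warning about the primary provider.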

How to design production-grade prompts

Prompts should be managed as process assets, not personal hacks. Version them like code and attach measurable quality criteria.

Prompt template for repeatable operations

- Objective: expected output and the business user it serves.

- Context: source data and constraints.

- Boundaries: mandatory rules and forbidden content.

- Output format: JSON/HTML/Markdown contract.

- Quality checks: criteria used during review.

This significantly reduces variability and review overhead across teams.
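One way to treat the template as a versioned asset is to store it as a typed record kept under source control. The `PromptSpec` class and its field names below are an illustrative assumption that mirrors the five template parts above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptSpec:
    name: str
    version: str
    objective: str
    boundaries: tuple[str, ...]
    output_format: str
    quality_checks: tuple[str, ...]  # used by reviewers, not sent to the model

    def render(self, context: str) -> str:
        """Build the prompt text from the spec and the per-run context."""
        rules = "\n".join(f"- {rule}" for rule in self.boundaries)
        return (
            f"# {self.name} v{self.version}\n"
            f"Objective: {self.objective}\n"
            f"Context:\n{context}\n"
            f"Boundaries:\n{rules}\n"
            f"Output format: {self.output_format}\n"
        )
```

Because the spec is frozen and carries a version, a change in output quality can be traced to a specific prompt revision in the same way a regression is traced to a commit.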

Change management and adoption discipline

Resistance to AI is usually organizational, not technical. Teams need a transition narrative and practical support.

- Explain which low-value tasks are removed.

- Map which higher-value skills become more important.

- Train on real internal examples, not generic demos.

- Publish weekly success cases with measurable impact.

- Reward process improvements, not only output volume.

When teams see concrete gains, adoption becomes pull-driven rather than forced.

Cost governance for AI usage

Token and API costs can grow quickly without policy. Introduce financial governance from day one.

- Set monthly budget envelopes per team/function.

- Route simple tasks to lower-cost models.

- Cache stable outputs where possible.

- Measure cost per process (e.g., proposal generation cost).

Cost visibility turns AI discussions into informed operating decisions instead of abstract enthusiasm.
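Three of these rules (budget envelopes, routing by task complexity, and cost per process) fit in a few lines. The prices, task types, and the `BudgetEnvelope` class below are made-up placeholders, not real vendor rates; caching is omitted from this sketch.

```python
from collections import defaultdict

PRICE_PER_1K_TOKENS = {"small": 0.5, "large": 10.0}  # hypothetical prices, in cents
SIMPLE_TASKS = {"classification", "summary"}  # hypothetical routing rule


def pick_model(task_type: str) -> str:
    """Route simple tasks to the cheaper model tier."""
    return "small" if task_type in SIMPLE_TASKS else "large"


class BudgetEnvelope:
    """Tracks spend per process against one monthly limit."""

    def __init__(self, monthly_limit_cents: float):
        self.limit = monthly_limit_cents
        self.spent_by_process = defaultdict(float)

    def charge(self, process: str, task_type: str, tokens: int) -> float:
        cost = tokens / 1000 * PRICE_PER_1K_TOKENS[pick_model(task_type)]
        if sum(self.spent_by_process.values()) + cost > self.limit:
            raise RuntimeError("monthly budget envelope exceeded")
        self.spent_by_process[process] += cost
        return cost
```

The `spent_by_process` totals give you the cost-per-process figure directly, for example what a single proposal draft costs on average.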

Mini case: 12-week impact snapshot

A mid-sized software house (around 45 people) started with two pilot workflows: proposal drafting and weekly reporting. Before rollout, proposal preparation consumed roughly 9–12 hours across multiple roles. After 12 weeks, with mandatory lead review still in place, average time dropped to 3–4 hours while consistency improved through standardized assumptions and risk sections.

In reporting, PM effort decreased from about 2.5 hours to 40–50 minutes per week. The result was not merely speed. Report quality improved because decisions, blockers, and delivery risks were clearly structured for client review.

- Proposal lead time: -58%.

- Weekly reporting effort: -67%.

- Proposal revision rounds: -31%.

- Quarterly client satisfaction: +18%.

Main lesson: gains came from process design, quality input data, and explicit approval ownership. AI amplified discipline; it did not replace it.

Conclusion

AI automation in a software house is not a side experiment. It is an operating model upgrade that can improve margin, quality, and predictability when implemented with clear boundaries. Start with measurable, repetitive workflows such as proposals and reporting. Then scale to discovery and QA once governance is stable.

With a process-first approach, software house automation shifts from “interesting initiative” to daily execution standard.

FAQ

Will AI remove developer and QA roles?

Typically no. It changes role composition: less repetitive production, more ownership of quality and decision-making.

Where should smaller teams begin?

Start with one repetitive process (proposal drafts or weekly reports), measure gains, then expand.

How do we prove business value?

Track lead time, revision counts, escaped defects, and client satisfaction before and after implementation.
