AI in a product doesn’t start with a model. It starts with decisions: which business problem you’re solving, how you’ll measure impact, and the smallest safe scope you can ship into a real workflow. The most expensive AI failures come from building “90% of the tech” before validating that the first 10% creates real value.

This guide lays out a pragmatic path from MVP to 1.0: clear steps, checklists, and examples. It’s written for teams integrating AI into an existing SaaS/app/dashboard, or planning an AI-first module — while keeping budget, risk, and maintainability under control.

1) Start with a real problem (not a “cool model” idea)

The best AI features usually do one of four things:

- save time (summaries, content drafts, data extraction),

- improve quality (detect missing data, classify requests, QA),

- increase revenue (lead scoring, recommendations, upsell),

- reduce risk (fraud, compliance, monitoring).

Avoid goals like “We need AI because competitors have it.” Instead, run a quick diagnostic:

- Which process is the current bottleneck?

- Who feels the pain: end users, support, sales, ops?

- What is the one click/step that could be replaced with a suggestion or automation?

- What will be the undeniable proof of success (a metric)?

15-minute MVP brief

- Job to be done: The user wants to do X to achieve Y.

- Input: what data does AI receive (text, files, forms, logs)?

- Output: what should AI return (text, label, number, decision)?

- Mode: autopilot or copilot (recommend + human approval)?

- Risk: what happens if AI is wrong?

- Metric: handle time, accuracy, conversion, satisfaction, acceptance rate.
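One way to keep the brief honest is to store it as a small structured record and check that no field was left blank. The sketch below is only an illustration: the `MvpBrief` class, its field names, and the sample values are hypothetical, not part of any library.

```python
from dataclasses import dataclass, fields


@dataclass
class MvpBrief:
    """Hypothetical container for the six brief items above."""
    job_to_be_done: str
    input_data: str
    output: str
    mode: str            # "copilot" or "autopilot"
    risk_if_wrong: str
    metric: str

    def missing_fields(self) -> list[str]:
        """Return the names of fields that were left blank."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]


# Illustrative values only.
brief = MvpBrief(
    job_to_be_done="Support agent wants to route a ticket to reach the right owner",
    input_data="ticket subject and body",
    output="category label",
    mode="copilot",
    risk_if_wrong="",
    metric="misrouting rate",
)
print(brief.missing_fields())
```

A brief with an empty risk field is a signal to stop and discuss before any build starts.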

2) Pick the right approach: LLM, classification, RAG, or rules

“AI” is a broad umbrella. For MVPs, simplicity often wins:

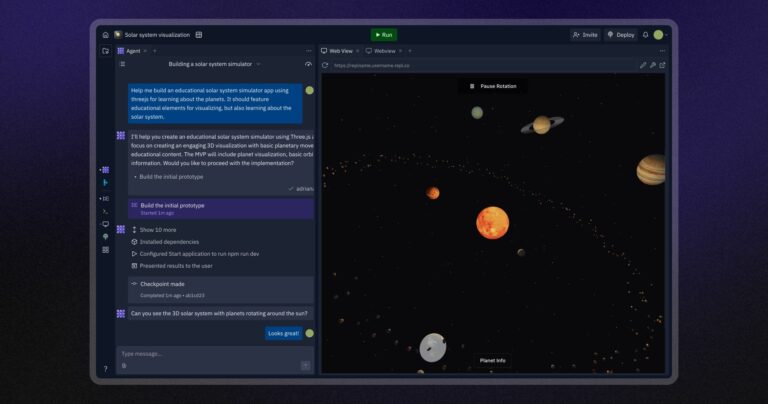

- LLM copilot (drafts, summaries, suggested replies): fast to ship, but requires quality control.

- Classification/routing (labels, owners, priorities): cheap to maintain and easy to measure.

- Data extraction (invoices, contracts, emails): high ROI, but needs robust testing on messy inputs.

- RAG (retrieve knowledge + answer): great if you have useful documentation, but permissions matter.

- Rules/heuristics: often the best starting point; upgrade later if needed.

Rule of thumb: if the cost of a mistake is high (legal, financial, health), start as a copilot (human in the loop). If mistakes are cheap and rare, you can move faster toward automation.
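The rule of thumb can be written down as a tiny decision function, so the choice is explicit and reviewable. This is a sketch under assumptions: the function name, the "high"/"low" cost labels, and the 2% error threshold are illustrative choices, not recommendations from a standard.

```python
def choose_mode(mistake_cost: str, observed_error_rate: float,
                max_error_rate: float = 0.02) -> str:
    """Return "copilot" or "autopilot".

    mistake_cost: "high" for legal, financial, or health impact, else "low".
    max_error_rate: assumed threshold for "rare" mistakes; tune per product.
    """
    if mistake_cost == "high":
        return "copilot"          # always keep a human in the loop
    if observed_error_rate <= max_error_rate:
        return "autopilot"        # mistakes are cheap and rare
    return "copilot"              # cheap but too frequent: keep approval


print(choose_mode("high", 0.0))
print(choose_mode("low", 0.01))
print(choose_mode("low", 0.20))
```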

3) Data: the least glamorous, most critical part

Data work is where budgets burn, because data quality sets the ceiling on output quality. For an MVP you don’t need a massive data platform; you need a representative slice of reality.

MVP data checklist

- Sources: where does data come from (DB, CRM, files, helpdesk)?

- Quality: missing fields, duplicates, languages, formats, PII.

- Ground truth: do you have labels? If not, how will you collect them?

- Legal/consent: can you send this data to a third-party provider?

- Permissions: who can see which documents/answers?

- Feedback loop: where can users approve/reject and explain why?
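Parts of this checklist can be checked mechanically on a sample export before any model work begins. The following is a minimal sketch, assuming records arrive as plain dictionaries. The field names (`subject`, `body`, `label`) are hypothetical, and the email pattern is a rough heuristic, not a complete PII detector.

```python
import re
from collections import Counter

# Rough email pattern, used only as a cheap PII signal.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def audit_records(records, required_fields, text_field="body"):
    """Count missing fields, duplicate texts, and texts containing an email."""
    missing = Counter()
    for rec in records:
        for name in required_fields:
            if not rec.get(name):          # None, "", or absent
                missing[name] += 1
    texts = [rec.get(text_field) or "" for rec in records]
    text_counts = Counter(t.strip().lower() for t in texts if t.strip())
    duplicates = sum(n - 1 for n in text_counts.values() if n > 1)
    with_email = sum(1 for t in texts if EMAIL_RE.search(t))
    return {
        "total": len(records),
        "missing": dict(missing),
        "duplicates": duplicates,
        "with_email": with_email,
    }


sample = [
    {"subject": "Refund", "body": "Please refund order 123", "label": "billing"},
    {"subject": "Refund", "body": "please refund order 123", "label": ""},
    {"subject": "", "body": "Contact me at jane@example.com", "label": None},
]
report = audit_records(sample, required_fields=["subject", "label"])
print(report)
```

Even a crude report like this shows quickly whether labels exist and whether PII would leave the system.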

If you discover that data isn’t usable yet, that’s a win: you just avoided weeks of wasted development. In that case your MVP is about collecting the right data (e.g., labeling tools in the dashboard), not “shipping AI”.

4) MVP scope: minimal build, maximal validation

An AI MVP should answer a single question: does it create value inside the real workflow? Not: does it look good in a demo.

Example 1: AI product description drafts

- Input: product name, attributes, 2–3 key benefits, optional image.

- Output: 3 draft variants, with brand tone and length as selectable options.

- Mode: copilot (user selects/edits).

- Metrics: time to publish, adoption, acceptance-without-editing.

Example 2: Support ticket routing

- Input: subject + body + customer history.

- Output: category + priority + suggested owner.

- Mode: semi-auto (human confirmation).

- Metrics: time to first response, misrouting rate.
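For Example 2, a rules-based baseline is often enough to start measuring misrouting before any model is involved. The sketch below assumes keyword rules. The categories, owners, and keywords are made up for illustration, and every result is flagged for human confirmation to match the semi-auto mode.

```python
# Hypothetical rules: (category, priority, owner, keywords).
RULES = [
    ("billing", "high", "finance-team", ("refund", "invoice", "charge")),
    ("access", "high", "support-l2", ("password", "login", "locked")),
]
DEFAULT = ("general", "normal", "support-l1")


def route_ticket(subject: str, body: str) -> dict:
    """Suggest category, priority, and owner; a human confirms the result."""
    text = f"{subject} {body}".lower()
    for category, priority, owner, keywords in RULES:
        if any(keyword in text for keyword in keywords):
            return {"category": category, "priority": priority,
                    "owner": owner, "needs_confirmation": True}
    category, priority, owner = DEFAULT
    return {"category": category, "priority": priority,
            "owner": owner, "needs_confirmation": True}


print(route_ticket("Double charge", "I was billed twice this month"))
print(route_ticket("Hello", "A question about features"))
```

Logging how often agents override the suggestion gives the misrouting rate, and a baseline that any later classifier has to beat.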

Recommended MVP scope

- 1 UI entry point (not everywhere).

- 1–2 use cases + explicit out-of-scope list.

- Error handling: timeout, missing data, permission issues, rate limits.

- Logging & analytics: input/output, latency, acceptance/corrections.

- Kill switch: feature flag + rollback.
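The error-handling and kill-switch items can share one thin wrapper around the model call. This is a sketch, not a reference implementation. The in-memory `FLAGS` dict stands in for whatever feature-flag service the product uses, the `fake_model` and `slow_model` functions are stand-ins for a provider client, and mapping failures to `TimeoutError`, `PermissionError`, and `ValueError` is an assumption.

```python
import logging
import time

logger = logging.getLogger("ai_feature")

# Hypothetical in-memory flag store; replace with your flag service.
FLAGS = {"ai_drafts": True}


def run_ai_feature(flag_name, call_model, payload, fallback):
    """Call the model if the flag is on; otherwise, or on error, use the fallback."""
    if not FLAGS.get(flag_name, False):
        return {"source": "fallback", "result": fallback, "reason": "flag_off"}
    started = time.monotonic()
    try:
        result = call_model(payload)
    except (TimeoutError, PermissionError, ValueError) as exc:
        logger.warning("AI call failed (%s), using fallback", type(exc).__name__)
        return {"source": "fallback", "result": fallback,
                "reason": type(exc).__name__}
    latency_ms = round((time.monotonic() - started) * 1000)
    logger.info("AI call ok in %d ms", latency_ms)
    return {"source": "ai", "result": result, "reason": None}


def fake_model(payload):            # stand-in for a real provider call
    return f"Draft for {payload['name']}"


def slow_model(payload):            # simulates a provider timeout
    raise TimeoutError("provider did not answer in time")


ok = run_ai_feature("ai_drafts", fake_model, {"name": "Desk lamp"}, fallback="")
timed_out = run_ai_feature("ai_drafts", slow_model, {"name": "Desk lamp"}, fallback="")
FLAGS["ai_drafts"] = False          # the kill switch
switched_off = run_ai_feature("ai_drafts", fake_model, {"name": "Desk lamp"}, fallback="")
```

The UI only needs to read `source` and `reason` to decide whether to show the draft or the manual form.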

5) Metrics & evaluation: don’t fool yourself

With AI it’s easy to fall in love with cherry-picked examples. An MVP needs metrics at two levels:

- Product impact: conversion, time saved, satisfaction.

- Component quality: correctness, completeness, tone, safety.

Minimum MVP metrics

- Adoption: % of users who tried it.

- Assist rate: % of interactions where AI helped (e.g., “Apply” clicked).

- Correction cost: time to fix AI output (crucial!).

- Latency & cost: response time and cost per request.

- Safety: rejected outputs, incidents, reports.
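Several of these metrics fall out of a plain event log. The sketch below assumes a hypothetical event schema (`suggestion_shown`, `suggestion_applied`, and an `edit_seconds` field recorded when a suggestion is applied); the exact definitions should be agreed with whoever owns the product metric.

```python
from statistics import mean


def mvp_metrics(events, active_users):
    """Compute adoption, assist rate, and average correction time."""
    tried = {e["user"] for e in events if e["type"] == "suggestion_shown"}
    shown = sum(1 for e in events if e["type"] == "suggestion_shown")
    applied = [e for e in events if e["type"] == "suggestion_applied"]
    edit_times = [e["edit_seconds"] for e in applied]
    return {
        # share of active users who saw at least one suggestion
        "adoption": len(tried) / active_users if active_users else 0.0,
        # share of shown suggestions where "Apply" was clicked
        "assist_rate": len(applied) / shown if shown else 0.0,
        # time spent fixing AI output before it was usable
        "avg_correction_seconds": mean(edit_times) if edit_times else 0.0,
    }


events = [
    {"user": "u1", "type": "suggestion_shown"},
    {"user": "u1", "type": "suggestion_applied", "edit_seconds": 20},
    {"user": "u2", "type": "suggestion_shown"},
    {"user": "u2", "type": "suggestion_shown"},
    {"user": "u2", "type": "suggestion_applied", "edit_seconds": 40},
]
metrics = mvp_metrics(events, active_users=4)
print(metrics)
```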

For LLMs, run a simple human evaluation on real samples (50–200). Define a rubric (correctness, completeness, hallucination risk). Do it manually first; automate later.
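Manual review still benefits from consistent bookkeeping. Here is a minimal sketch for summarizing rubric scores, assuming reviewers rate each sample from 1 to 5 per criterion. The criterion names and the pass threshold of 4 are illustrative assumptions.

```python
from statistics import mean

# Assumed rubric; higher is better on every criterion.
RUBRIC = ("correctness", "completeness", "no_hallucination")


def summarize_reviews(reviews, pass_threshold=4):
    """Average each criterion and compute the share of fully passing samples."""
    if not reviews:
        return {"per_criterion": {}, "pass_rate": 0.0}
    per_criterion = {c: mean(r[c] for r in reviews) for c in RUBRIC}
    passed = sum(1 for r in reviews
                 if all(r[c] >= pass_threshold for c in RUBRIC))
    return {"per_criterion": per_criterion, "pass_rate": passed / len(reviews)}


reviews = [
    {"correctness": 5, "completeness": 4, "no_hallucination": 5},
    {"correctness": 4, "completeness": 4, "no_hallucination": 4},
    {"correctness": 2, "completeness": 5, "no_hallucination": 3},
    {"correctness": 5, "completeness": 5, "no_hallucination": 5},
]
summary = summarize_reviews(reviews)
print(summary)
```

Tracking the pass rate per prompt version makes it visible whether a change actually helped.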

6) MVP architecture: simple, but production-ready

The classic mistake is building a prototype that can’t evolve into 1.0. A robust MVP pattern:

- AI gateway (backend): auth, limits, routing to provider, logging, caching.

- Versioned prompts/policies: prompt templates and safety rules under version control.

- Observability: trace IDs, masked logs, cost metrics.

- Feature flags: enable/disable per environment and cohort.

Must-have safeguards

- PII redaction if data leaves your system.

- Rate limiting to protect budget.

- Timeout + retry with sensible UI fallback.

- Audit log of who generated what and when.
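Three of these safeguards fit in a few dozen lines of gateway code. The sketch below is a simplified illustration. The regular expressions catch only obvious emails and phone numbers, the limiter keeps state in memory for a single process, and all names are hypothetical. Audit logging is left out.

```python
import re
import time
from collections import defaultdict, deque

EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact_pii(text: str) -> str:
    """Mask obvious emails and phone numbers before text leaves the system."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


class SlidingWindowLimiter:
    """Allow at most max_calls per user within window_seconds."""

    def __init__(self, max_calls, window_seconds, clock=time.monotonic):
        self.max_calls = max_calls
        self.window_seconds = window_seconds
        self.clock = clock
        self._calls = defaultdict(deque)

    def allow(self, user_id) -> bool:
        now = self.clock()
        calls = self._calls[user_id]
        while calls and now - calls[0] >= self.window_seconds:
            calls.popleft()                 # drop calls outside the window
        if len(calls) >= self.max_calls:
            return False
        calls.append(now)
        return True


def call_with_retry(fn, attempts=2, backoff_seconds=0.0, fallback=None):
    """Retry on TimeoutError, then return the fallback for the UI."""
    for attempt in range(attempts):
        try:
            return fn()
        except TimeoutError:
            if attempt < attempts - 1:
                time.sleep(backoff_seconds * (attempt + 1))
    return fallback


print(redact_pii("Mail jane@example.com or call +1 555 123 4567"))
```

The injectable `clock` argument exists so the limiter can be tested without waiting for real time to pass.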

7) From MVP to 1.0: what to add, in what order

AI 1.0 is when the feature has stable quality, predictable cost, and a clear ownership/maintenance plan. A practical upgrade path:

- In-product feedback (thumbs up/down + reason).

- Better context: RAG, conversation memory, customer context.

- Guardrails: prohibited topics, required sources, JSON outputs, validation.

- Regression tests: a fixed case set that must pass after model/prompt changes.

- Cost optimization: caching, smaller models, context trimming, batching.

- Personalization: per segment, brand voice, dictionaries.
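The regression-test step can start as a fixed list of cases with simple string expectations. The sketch below assumes a `generate` callable that wraps the current model and prompt. The `fake_generate` function and the cases are placeholders, and substring checks are a deliberately crude stand-in for a fuller rubric.

```python
def run_regression(cases, generate):
    """Run every case through generate() and collect the failures."""
    failures = []
    for case in cases:
        output = generate(case["input"]).lower()
        missing = [s for s in case.get("must_include", [])
                   if s.lower() not in output]
        forbidden = [s for s in case.get("must_not_include", [])
                     if s.lower() in output]
        if missing or forbidden:
            failures.append({"id": case["id"], "missing": missing,
                             "forbidden": forbidden})
    return failures


def fake_generate(prompt: str) -> str:
    # Placeholder for the real model call behind the AI gateway.
    return f"Summary: {prompt}. Guaranteed results!"


CASES = [
    {"id": "keeps-product-name", "input": "Desk lamp with USB port",
     "must_include": ["desk lamp"]},
    {"id": "no-overpromising", "input": "Vitamin supplement",
     "must_not_include": ["guaranteed"]},
]
failures = run_regression(CASES, fake_generate)
print(failures)
```

Running this set after every model or prompt change turns "it still looks fine" into a pass/fail signal.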

8) Common budget traps

- Everything at once: no scope, no metrics, no prioritization.

- No business owner: without workflow ownership the feature dies.

- No feedback loop: you can’t systematically improve quality.

- No cost controls: no limits/caching = runaway bills.

- Security last: you learn late that data can’t be sent externally, and the feature needs rework.

Summary: An AI MVP is a product project, not a model project

To reach AI 1.0, focus on problem selection, data readiness, metrics, and workflow integration. Models are tools. The best MVP is small in scope but real in usage — and delivers measurable value that justifies the next iteration.

FAQ

Do we need to train our own model?

Usually not for an MVP. Start with off-the-shelf APIs and only consider fine-tuning or custom models once you have usage data and clear limitations.

How do we reduce hallucinations?

Use RAG with curated sources, require citations when possible, enforce output formats (JSON), add guardrails and validation, and keep high-risk workflows human-in-the-loop.
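Enforced output formats and required citations can be checked in code before an answer reaches the user. The sketch below assumes the model was asked to return a JSON object with `answer` and `sources` keys. That schema and the source IDs are assumptions for illustration, not a provider feature.

```python
import json


def validate_answer(raw: str, allowed_source_ids: set):
    """Return (data, None) if the answer is usable, else (None, reason)."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None, "not valid JSON"
    if not isinstance(data, dict):
        return None, "expected a JSON object"
    answer = data.get("answer")
    sources = data.get("sources")
    if not isinstance(answer, str) or not answer.strip():
        return None, "missing answer"
    if (not isinstance(sources, list) or not sources
            or not all(isinstance(s, str) for s in sources)):
        return None, "no sources cited"
    unknown = [s for s in sources if s not in allowed_source_ids]
    if unknown:
        return None, f"unknown sources: {unknown}"   # likely invented citation
    return data, None


retrieved = {"doc-12", "doc-31"}   # IDs of the documents given to the model
good = validate_answer('{"answer": "Refunds take 5 days.", "sources": ["doc-12"]}', retrieved)
bad = validate_answer('{"answer": "Refunds are instant.", "sources": ["doc-99"]}', retrieved)
print(good, bad)
```

A rejected answer can fall back to "no answer found" or be routed to a human, rather than shown as-is.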

How long should an MVP take?

Typically 2–6 weeks. If you can’t get real users and real metrics by then, the scope is likely too broad or the data or workflow isn’t ready.
